How can we trust deep learning models for time series classification when their decision-making remains a "black box"? Can transforming continuous signals into discrete, meaningful patterns be the key to unlocking explainability?

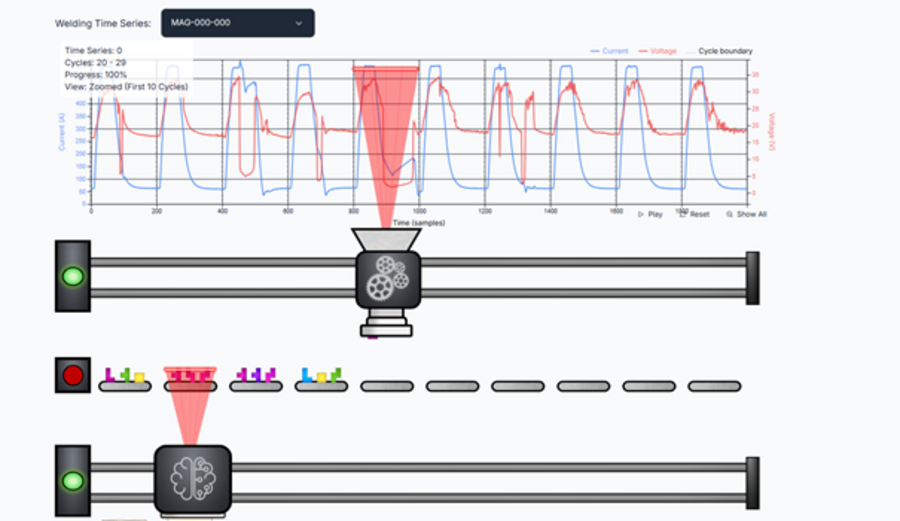

These questions are addressed in our paper, 'EXCODER: EXplainable Classification Of DiscretE time series Representations', written by Yannik Hahn, Antonin Königsfeld, Dr.-Ing. Hasan Tercan and Tobias Meisen, which has been accepted at PAKDD 2026. We investigate how transforming raw time series into discrete latent representations can enhance interpretability in complex domains like industrial monitoring.

Our key findings and contributions are:

• Discrete Latent Representations: We show that compressing time series into discrete codes reduces noise and redundancy, yielding more concise and structured explanations than approaches that operate on the raw data.

• Novel Evaluation Metric (SSA): We introduce Similar Subsequence Accuracy (SSA), a quantitative metric that checks whether the patterns identified by XAI methods actually align with the label distribution in the training data.

• Compact & Faithful: Applying XAI methods (such as LIME) to these discrete representations maintains classification performance while producing significantly more compact and stable explanations.
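
For readers who want a feel for the two ideas above, here is a minimal Python sketch. It is not the implementation from the paper. The `discretize` helper stands in for a learned encoder by mapping window means to the nearest entry of a hand-picked scalar codebook, and `subsequence_label_agreement` is only one plausible reading of an SSA-style check: among training sequences that contain a highlighted code pattern, what fraction carry the predicted label? All function names, the codebook, and the toy data are assumptions made for illustration.

```python
from typing import List, Optional, Sequence


def discretize(signal: Sequence[float], codebook: Sequence[float], window: int) -> List[int]:
    """Map each non-overlapping window to the index of the nearest codebook value.

    Illustrative stand-in for a learned discrete encoder (assumption).
    """
    codes = []
    for start in range(0, len(signal) - window + 1, window):
        mean = sum(signal[start:start + window]) / window
        nearest = min(range(len(codebook)), key=lambda k: abs(codebook[k] - mean))
        codes.append(nearest)
    return codes


def contains(codes: Sequence[int], pattern: Sequence[int]) -> bool:
    """Return True if `pattern` occurs as a contiguous run inside `codes`."""
    m = len(pattern)
    return any(list(codes[i:i + m]) == list(pattern) for i in range(len(codes) - m + 1))


def subsequence_label_agreement(
    pattern: Sequence[int],
    predicted_label: str,
    train_codes: Sequence[Sequence[int]],
    train_labels: Sequence[str],
) -> Optional[float]:
    """Fraction of training sequences containing `pattern` whose label matches.

    Hypothetical SSA-style score; returns None if the pattern never occurs.
    """
    matched = [lab for seq, lab in zip(train_codes, train_labels) if contains(seq, pattern)]
    if not matched:
        return None
    return sum(lab == predicted_label for lab in matched) / len(matched)


# Toy data (made up for illustration only).
codebook = [0.0, 0.5, 1.0]
signal = [0.1, -0.1, 0.9, 1.1, 0.4, 0.6]
codes = discretize(signal, codebook, window=2)

train_codes = [[0, 2, 1, 1], [2, 1, 0, 0], [0, 0, 1, 2]]
train_labels = ["defect", "defect", "ok"]

# Suppose an XAI method highlighted the code pattern [2, 1] for a "defect" prediction.
score = subsequence_label_agreement([2, 1], "defect", train_codes, train_labels)
```

A score near 1 would suggest that the highlighted pattern is characteristic of the predicted class in the training set, while a score near the class prior would suggest it carries little class-specific information.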

To see the concept behind our model in action, we invite you to explore our interactive dashboard: XAI Welding Analysis

The preprint for our paper is now online. You can read the full text here: [2602.13087v1] EXCODER: EXplainable Classification Of DiscretE time series Representations